Real-Time Data Engineering: Why It’s Critical for AI-Driven Businesses

Walk into any modern AI discussion today, and one expectation stands out: speed is no longer optional. Models, dashboards, and decision systems are expected to respond instantly—not hours later, not even minutes later.

That’s why real-time data processing is no longer just an engineering upgrade. It’s becoming a business capability.

A decade ago, batch pipelines were enough. Data moved overnight, reports were reviewed the next morning, and decisions followed. But AI-driven businesses operate differently. Customer behavior changes in seconds. Fraud happens in milliseconds. Markets move continuously.

This is where streaming data pipelines and event-driven architecture come into focus—not as trends, but as necessities.

The Shift from Batch to Real-Time

Traditional pipelines were built around stability. Data was collected, stored, and processed in batches. This worked when decision cycles were slow.

But AI systems don’t wait.

- Recommendation engines need live user behavior

- Fraud detection requires immediate anomaly detection

- Supply chains depend on real-time signals

Batch pipelines introduce latency. And latency, in an AI-driven environment, translates directly into missed opportunities or increased risk.
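
To put a rough number on that latency, here is a back-of-the-envelope sketch. It assumes events arrive evenly across the batch interval, so the average event waits half an interval before the job even starts. The function name and the example figures (a nightly job that runs for 30 minutes, a one-minute micro-batch that runs for 5 seconds) are illustrative assumptions, not measurements.

```python
def average_staleness_seconds(batch_interval_s, processing_time_s):
    """Mean age of an event by the time its batch results are available.

    Assumes uniform arrivals: the average event waits half the interval
    for the batch to start, then the full processing time.
    """
    return batch_interval_s / 2 + processing_time_s


# Hypothetical nightly batch: 24-hour interval, 30-minute job.
nightly = average_staleness_seconds(24 * 3600, 30 * 60)

# Hypothetical micro-batch: 60-second interval, 5-second job.
micro = average_staleness_seconds(60, 5)

print(f"nightly batch: {nightly / 3600:.1f} hours stale on average")
print(f"micro-batch:   {micro:.0f} seconds stale on average")
```

Under these assumptions the nightly pipeline acts on data that is about half a day old on average, while the micro-batch stays well under a minute.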

That’s why organizations are investing in real-time analytics platforms that can process and act on data as it arrives.

What Real-Time Data Processing Actually Means

At a technical level, real-time data processing is about handling data as events occur, rather than after they accumulate.

This is typically powered by event-driven architecture, where:

- Systems emit events (user clicks, transactions, sensor data)

- These events are streamed through pipelines

- Downstream systems react as each event arrives

Platforms like Apache Kafka have become foundational here. Kafka acts as the central nervous system for streaming data, allowing systems to publish and consume events in real time.

On top of Kafka, companies like Confluent have built enterprise-grade streaming ecosystems, making it easier to scale and manage these architectures.
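
The publish-and-consume model is easier to picture with a toy example. The sketch below is not Kafka's API. It is a minimal in-memory stand-in, with a hypothetical `EventLog` class, that imitates one core idea: events are appended to a shared log, and each consumer tracks its own read position, so several consumers can read the same events independently.

```python
from collections import defaultdict


class EventLog:
    """Toy in-memory stand-in for a topic: an append-only list of events."""

    def __init__(self):
        self._events = []
        # Each consumer name maps to the index of the next event it will read.
        self._offsets = defaultdict(int)

    def publish(self, event):
        """Append an event and return its position (offset) in the log."""
        self._events.append(event)
        return len(self._events) - 1

    def poll(self, consumer, max_events=10):
        """Return unread events for this consumer and advance its offset."""
        start = self._offsets[consumer]
        batch = self._events[start:start + max_events]
        self._offsets[consumer] = start + len(batch)
        return batch


log = EventLog()
log.publish({"type": "click", "user": "u1"})
log.publish({"type": "purchase", "user": "u1", "amount": 42.0})

# Two independent consumers each see the full stream.
recs_batch = log.poll("recommendations")
fraud_batch = log.poll("fraud-detection")

# A later event is delivered only once to a consumer that is caught up.
log.publish({"type": "click", "user": "u2"})
recs_next = log.poll("recommendations")
```

Because reading does not remove events from the log, adding a third consumer later would not affect the first two. A real broker adds durability, partitioning, and network delivery on top of this idea.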

Why Event-Driven Architecture Changes Everything

Event-driven systems don’t just process data faster—they change how systems are designed.

Instead of tightly coupled pipelines, you get loosely connected services that react to events independently. This creates:

- Scalability: Systems can process millions of events concurrently

- Flexibility: New consumers can be added without disrupting pipelines

- Resilience: Failures in one component don’t break the entire system
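
The flexibility and resilience points can be illustrated with a small sketch. The `EventBus` class and handler names below are hypothetical. In production this isolation usually comes from running consumers as separate services behind a broker; the `try`/`except` here only imitates that behavior inside a single process.

```python
from collections import defaultdict


class EventBus:
    """Minimal in-process bus: handlers subscribe to event types by name."""

    def __init__(self):
        self._handlers = defaultdict(list)
        self.errors = []  # (handler name, error) pairs, kept for inspection

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def emit(self, event_type, payload):
        """Deliver to every handler; one failure does not stop the others."""
        delivered = 0
        for handler in list(self._handlers[event_type]):
            try:
                handler(payload)
                delivered += 1
            except Exception as exc:
                name = getattr(handler, "__name__", repr(handler))
                self.errors.append((name, repr(exc)))
        return delivered


bus = EventBus()
seen_by_analytics = []
seen_by_audit = []


def analytics(payload):
    seen_by_analytics.append(payload["order_id"])


def flaky_email(payload):
    raise RuntimeError("mail server down")


def audit(payload):
    seen_by_audit.append(payload["order_id"])


bus.subscribe("order_placed", flaky_email)
bus.subscribe("order_placed", analytics)
delivered = bus.emit("order_placed", {"order_id": 1})

# A new consumer is added later without touching the existing ones.
bus.subscribe("order_placed", audit)
bus.emit("order_placed", {"order_id": 2})
```

The failing email handler is recorded in `bus.errors`, while the analytics handler still receives both orders and the late-joining audit handler receives only the second.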

This is why event-driven architecture is becoming the default foundation for real-time data processing in AI-first organizations.

It aligns perfectly with how modern applications behave: dynamic, distributed, and always on.

Streaming Data Pipelines for AI and LLM Applications

AI—and especially large language model (LLM) applications—depend heavily on fresh, contextual data.

Consider:

- Personalization engines updating recommendations in real time

- AI copilots adapting responses based on user interaction

- Fraud models continuously learning from transaction streams

These use cases rely on streaming data pipelines that feed feature stores and inference systems continuously, so models always see current context.
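
As a rough illustration of what "flowing into a feature store" can look like, the sketch below maintains one streaming feature: the number of transactions per user over the last 60 seconds. The `SlidingWindowCounter` class, the `tx_count_60s` feature name, and the plain dictionary standing in for a feature store are all assumptions for the example. It also assumes timestamps arrive in order for each user.

```python
from collections import defaultdict, deque


class SlidingWindowCounter:
    """Counts events per key over the last `window_seconds` of event time."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self._timestamps = defaultdict(deque)

    def add(self, key, timestamp):
        """Record one event and return the in-window count for the key."""
        window = self._timestamps[key]
        window.append(timestamp)
        # Evict timestamps that have fallen out of the window.
        while window and window[0] <= timestamp - self.window_seconds:
            window.popleft()
        return len(window)


counter = SlidingWindowCounter(window_seconds=60)
feature_store = {}  # stand-in for a real feature store

# (user, timestamp in seconds) pairs, in arrival order.
events = [("u1", 0), ("u1", 10), ("u2", 15), ("u1", 65), ("u1", 70)]
for user, ts in events:
    # The feature is refreshed on every event, not on a schedule.
    feature_store[user] = {"tx_count_60s": counter.add(user, ts)}
```

An inference service reading `feature_store["u1"]` after the last event sees a count that reflects only the most recent minute of activity, which is the freshness a batch-computed feature cannot offer.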

Without real-time pipelines:

- Models become stale

- Predictions lose relevance

- User experience degrades

This is why real-time data engineering is not just supporting AI—it’s enabling it.

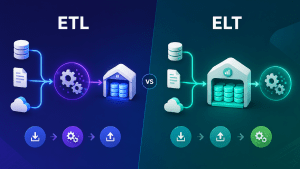

The Business Impact: ROI of Real-Time Analytics Platforms

The value of real-time systems is not just technical—it’s measurable.

Organizations adopting real-time analytics platforms typically see impact across three areas:

1. Faster Decision-Making

Executives and systems act on live data instead of historical reports.

2. Improved Customer Experience

Real-time personalization drives engagement and retention.

3. Risk Reduction

Fraud detection, anomaly monitoring, and operational alerts happen instantly.

The ROI of real-time analytics platforms often comes down to this:

better decisions, made earlier.

In competitive markets, timing is an advantage.

Where Real-Time Data Engineering Fits Best

Not every system needs to be real time. But in certain environments, it becomes critical.

High-Impact Use Cases:

- Financial services (fraud detection, trading systems)

- E-commerce (recommendations, pricing optimization)

- SaaS platforms (user behavior tracking, product analytics)

- Logistics (fleet tracking, route optimization)

In these scenarios, delays directly affect revenue, risk, or user experience.

Challenges You Can’t Ignore

Real-time systems are powerful, but they come with trade-offs.

Complexity

Streaming systems are harder to design and maintain than batch pipelines.

Data Consistency

Handling out-of-order events and duplicates requires careful engineering.
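
One common way to approach this is to deduplicate by event ID and hold events briefly until a watermark passes them. The sketch below is a simplified, single-process version of that idea. The `ReorderBuffer` class and the `allowed_lateness` parameter are hypothetical names, and the set of seen IDs grows without bound here, which a real system would need to cap or expire.

```python
import heapq


class ReorderBuffer:
    """Drops duplicate event IDs and releases events in timestamp order.

    The watermark is the largest timestamp seen minus `allowed_lateness`.
    Events are held until the watermark reaches them; events that arrive
    already behind the watermark are recorded as too late and dropped.
    """

    def __init__(self, allowed_lateness):
        self.allowed_lateness = allowed_lateness
        self._seen_ids = set()
        self._heap = []  # min-heap of (timestamp, event_id, payload)
        self._max_ts = float("-inf")
        self.dropped_late = []

    def push(self, event_id, timestamp, payload):
        """Accept one event and return any events now safe to emit."""
        if event_id in self._seen_ids:
            return []  # duplicate delivery, ignore
        self._seen_ids.add(event_id)
        if timestamp < self._max_ts - self.allowed_lateness:
            self.dropped_late.append(event_id)
            return []
        heapq.heappush(self._heap, (timestamp, event_id, payload))
        self._max_ts = max(self._max_ts, timestamp)
        return self._release()

    def _release(self):
        watermark = self._max_ts - self.allowed_lateness
        ready = []
        while self._heap and self._heap[0][0] <= watermark:
            ready.append(heapq.heappop(self._heap))
        return ready


buf = ReorderBuffer(allowed_lateness=5)
# (event_id, timestamp): "b" arrives out of order, "c" is delivered twice,
# and "e" arrives after the watermark has already moved past it.
arrivals = [("a", 1), ("c", 3), ("b", 2), ("c", 3),
            ("d", 10), ("e", 4), ("f", 20)]
emitted = []
for event_id, ts in arrivals:
    emitted.extend(eid for _, eid, _ in buf.push(event_id, ts, None))
```

The trade-off is visible in the `allowed_lateness` setting: a larger value tolerates more disorder but delays every result, while a smaller value is faster but drops more late events.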

Cost

Always-on systems can increase infrastructure costs if not optimized.

Governance

Real-time data still needs validation, monitoring, and compliance controls.

This is why the best real-time data processing platforms focus not just on speed, but also on reliability, observability, and governance.

Choosing the Right Real-Time Data Stack

If you’re evaluating your architecture, the decision isn’t just about adopting real time—it’s about where it makes sense.

Start with a few key questions:

- Do your use cases require instant decisions?

- Is latency affecting business outcomes?

- Can your current infrastructure support streaming workloads?

For many organizations, the answer leads to a hybrid approach:

- Batch pipelines for historical analysis

- Streaming pipelines for time-sensitive use cases

Platforms like Apache Kafka and Confluent often sit at the core of this hybrid model, bridging real-time and batch ecosystems.

The Bigger Shift: From Data Movement to Data Responsiveness

Real-time data engineering is not just about faster pipelines. It reflects a broader shift:

From moving data → to reacting to data.

This is what defines AI-driven enterprises. Systems are no longer passive—they are responsive, adaptive, and continuously learning.

A Practical Way Forward

If you’re planning to adopt real-time data processing, avoid trying to transform everything at once.

Instead:

- Start with high-impact use cases

- Build streaming pipelines incrementally

- Introduce event-driven architecture where it adds value

This reduces risk while delivering measurable outcomes early.

Conclusion

At some point, real-time data engineering stops being a technical upgrade and becomes a strategic advantage.

If your business depends on speed, personalization, or continuous intelligence, batch systems alone won’t be enough.

The goal isn’t to replace existing pipelines.

It’s to design systems that can respond as fast as the world they operate in.

FAQs

Is real-time data processing necessary for all businesses?

No. It’s most valuable where timing directly impacts outcomes, such as customer experience or risk management.

How do streaming data pipelines differ from traditional pipelines?

They process data continuously instead of in batches, enabling immediate insights and actions.

Are platforms like Kafka only for large enterprises?

Not anymore. Managed services from providers like Confluent have made streaming accessible to mid-sized organizations as well.

What’s the biggest mistake teams make?

Treating real-time as a technology upgrade instead of aligning it with business use cases.