ETL vs ELT: What’s Right for Modern Data Pipelines?

Walk into any data platform discussion today, and the same shift keeps coming up: pipelines are no longer designed just to move data; they’re designed to keep up with it. Volume is up, formats are messy, and consumers expect results in near real time. That’s why data pipeline architecture is being rethought across enterprises, not just modernised.

A decade ago, ETL was the default. You cleaned data before it touched your warehouse because storage and compute were expensive. That constraint shaped everything. Today, cloud systems have flipped that equation. Storage is cheap. Compute is elastic. The question is no longer just how to process data, but when.

That’s where the ETL vs ELT discussion becomes real.

ETL Still Exists for a Reason

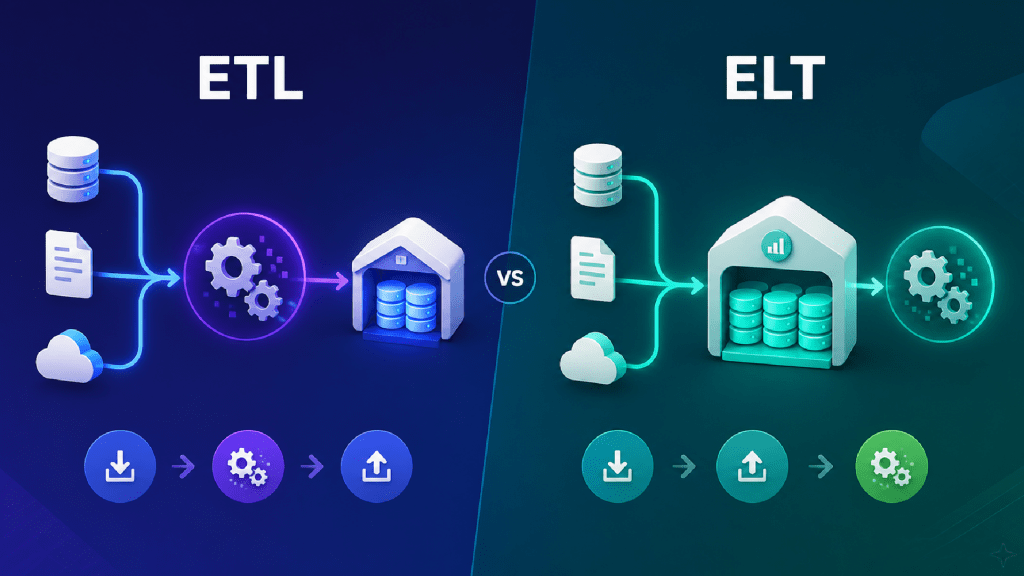

ETL (Extract, Transform, Load) means you shape your data before it lands. You apply rules, enforce schemas, and validate everything upfront. By the time data reaches your warehouse, it’s already curated.

That discipline matters. In banking or healthcare, for example, you don’t want raw, inconsistent data entering downstream systems. You need auditability, traceability, and confidence in how a number was derived. ETL gives you that control.

It also keeps downstream systems clean. Analysts don’t have to guess which version of a dataset is correct; it’s already standardised. The trade-off is rigidity. Change the transformation logic, and you’re often reworking the pipeline itself. Scale the data volume, and ETL pipelines can start to feel heavy.
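The validate-then-load sequence described above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the sample rows, the `transform` and `run_etl` helpers, and the `transactions` table are all hypothetical, and an in-memory SQLite database stands in for the warehouse.

```python
import sqlite3
from datetime import date

# Hypothetical extracted rows; in practice these come from a source system.
RAW_ROWS = [
    {"account_id": "A-100", "amount": "250.00", "posted_on": "2024-03-01"},
    {"account_id": "", "amount": "75.10", "posted_on": "2024-03-01"},     # missing id
    {"account_id": "A-101", "amount": "n/a", "posted_on": "2024-03-02"},  # bad amount
]

def transform(row):
    """Validate and type one row; return None if it breaks a rule."""
    if not row["account_id"]:
        return None
    try:
        amount_cents = round(float(row["amount"]) * 100)
        posted_on = date.fromisoformat(row["posted_on"])
    except ValueError:
        return None
    return (row["account_id"], amount_cents, posted_on.isoformat())

def run_etl(rows, conn):
    """Transform every row first, then load only the rows that passed."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS transactions ("
        "account_id TEXT NOT NULL, "
        "amount_cents INTEGER NOT NULL, "
        "posted_on TEXT NOT NULL)"
    )
    clean, rejected = [], []
    for row in rows:
        result = transform(row)
        if result is None:
            rejected.append(row)  # kept aside for audit, never loaded
        else:
            clean.append(result)
    conn.executemany("INSERT INTO transactions VALUES (?, ?, ?)", clean)
    conn.commit()
    return len(clean), len(rejected)

conn = sqlite3.connect(":memory:")  # stand-in for a real warehouse
loaded, rejected = run_etl(RAW_ROWS, conn)
print(f"loaded={loaded} rejected={rejected}")
```

Notice where the rigidity comes from: changing a validation rule means changing `transform` and rerunning the pipeline, because the rejected rows never reached the warehouse.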

ELT Changed the Order

ELT (Extract, Load, Transform) flips that sequence. You ingest raw data first, then transform it inside the warehouse.

This only works because modern cloud platforms can handle it. That’s the real story behind ELT in cloud data engineering. Warehouses like Snowflake, BigQuery, or Redshift aren’t just storage; they’re compute engines. You push transformations into them instead of handling everything upstream.

The benefit is flexibility. Raw data stays available, and you can reprocess it when needed. Different teams can transform the same data in different ways without duplicating ingestion pipelines. That’s powerful in analytics environments.
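As a rough sketch of that idea, the example below loads raw rows untouched and then defines two independent transformations over the same table. Everything here is an assumption made for illustration: SQLite stands in for a cloud warehouse, and the `raw_orders` table and the `finance_revenue` and `support_refunds` views are hypothetical names.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a cloud warehouse

# Load: raw records land as-is, with every field kept as text.
conn.execute(
    "CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT, status TEXT)"
)
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?, ?)",
    [
        ("1", "acme", "120.50", "paid"),
        ("2", "acme", "80.00", "refunded"),
        ("3", "globex", "42.00", "paid"),
    ],
)

# Transform (team 1): finance counts only paid orders as revenue.
conn.execute("""
    CREATE VIEW finance_revenue AS
    SELECT customer, SUM(CAST(amount AS REAL)) AS revenue
    FROM raw_orders
    WHERE status = 'paid'
    GROUP BY customer
""")

# Transform (team 2): support counts refunds per customer.
conn.execute("""
    CREATE VIEW support_refunds AS
    SELECT customer, COUNT(*) AS refunds
    FROM raw_orders
    WHERE status = 'refunded'
    GROUP BY customer
""")

revenue = dict(conn.execute("SELECT customer, revenue FROM finance_revenue"))
refunds = dict(conn.execute("SELECT customer, refunds FROM support_refunds"))
print(revenue, refunds)
```

Both views read the same raw table, so adding a third interpretation later needs no new ingestion work, only another query.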

But flexibility comes with responsibility. Without strong governance, you can end up with multiple versions of the truth.

Where the Real Differences Show Up

On paper, the difference between ETL and ELT is just the order of steps. In practice, it reshapes your architecture.

With ETL, transformation happens outside the warehouse. You rely on dedicated engines, and pipelines are tightly controlled. Data arrives curated and ready for use.

With ELT, the warehouse becomes the transformation layer. Pipelines are simpler, but the warehouse does more work.

The impact shows up in three areas. Scalability is stronger with ELT because you’re leveraging distributed cloud compute. Flexibility is higher because decisions can be deferred. Governance is stricter with ETL because consistency is enforced early.

This is why asking whether ETL or ELT is better for enterprises, without context, doesn’t help. It depends on what you value more: control or adaptability.

Where Each Approach Actually Fits

If your environment is compliance-heavy, ETL still wins. Banks, healthcare systems, and insurance platforms operate under strict regulatory requirements. Data must be structured, validated, and traceable before it is used. ETL aligns well with that need.

Now look at e-commerce or SaaS companies. Their priority is speed and iteration. Customer behaviour changes quickly, and teams need fast feedback loops. ELT supports this by allowing large-scale data processing and flexible transformations.

That’s the reality of choosing between ETL and ELT for data pipelines. It’s not about which is newer; it’s about which fits the problem.

Most mature organisations end up using both.

Why Cloud Is Pushing ELT Forward

Cloud didn’t just make ELT possible; it made it practical.

When compute and storage scale independently, there’s less reason to transform data before loading it. You can ingest everything, then decide how to use it later. This supports real-time analytics, machine learning pipelines, and exploratory workflows. Teams can adapt without rebuilding ingestion pipelines every time requirements change.

That’s why ELT in cloud data engineering is becoming the default approach in many modern systems.

Tools Reflect the Same Divide

An ETL tools comparison usually highlights platforms built for transformation-heavy workflows. Informatica is a classic example. It’s designed for structured, governed pipelines where transformation logic is central.

On the ELT side, tools like Fivetran focus on ingestion. They move data efficiently into cloud warehouses and rely on those systems for transformation.

Neither approach is better in isolation. They reflect different architectural choices. This is why conversations about enterprise data integration tools, and about ETL versus ELT, should start with system design, not tooling.

A Practical Way to Decide

If you’re figuring out how to choose an ETL or ELT platform, start with your constraints rather than your tools.

Ask yourself: how strict are your compliance requirements? Do you need access to raw data later? Are you optimising for control or speed? Is your infrastructure already cloud-native?

If compliance and consistency dominate, ETL is the safer path. If scale and flexibility matter more, ELT will likely be a better fit.
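One way to make those questions concrete is to write them down as a small checklist function. The sketch below is only a rough heuristic under stated assumptions: the `Constraints` fields and the `suggest_approach` rules are hypothetical, and they treat a cloud-native platform as a precondition for ELT because the transformations run inside the warehouse.

```python
from dataclasses import dataclass

@dataclass
class Constraints:
    """Hypothetical answers to the four questions above."""
    strict_compliance: bool
    needs_raw_data_later: bool
    optimising_for_speed: bool
    cloud_native: bool

def suggest_approach(c: Constraints) -> str:
    # Compliance pressure pulls towards transforming before load.
    etl_pull = c.strict_compliance
    # ELT assumes a warehouse that can absorb transformation work.
    elt_pull = c.cloud_native and (c.needs_raw_data_later or c.optimising_for_speed)

    if etl_pull and elt_pull:
        return "hybrid"
    if elt_pull:
        return "ELT"
    # Default to ETL when nothing argues for pushing work into the warehouse.
    return "ETL"

print(suggest_approach(Constraints(True, False, False, False)))  # regulated, on-premises
print(suggest_approach(Constraints(False, True, True, True)))    # fast-moving, cloud-native
print(suggest_approach(Constraints(True, True, False, True)))    # regulated and cloud-native
```

A real decision weighs cost, team skills, and existing tooling as well; the point is only that the answer follows from constraints, not from a product list.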

In many cases, choosing between ETL and ELT for data pipelines leads to a hybrid model. That’s not a compromise; it’s often the most practical design.
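A hybrid model can be as simple as transforming only the sensitive fields before load and leaving everything else for the warehouse. The sketch below assumes a hypothetical `raw_visits` table, a hypothetical `pseudonymise` helper built on the standard library's `hmac` module, and a hard-coded demo key; a real system would pull the key from a secrets manager or use a tokenisation service.

```python
import hashlib
import hmac
import sqlite3

DEMO_KEY = b"demo-key-not-for-production"  # assumption: real keys live in a secrets store

def pseudonymise(value: str) -> str:
    """Replace a sensitive identifier with a keyed hash before it is loaded."""
    return hmac.new(DEMO_KEY, value.encode(), hashlib.sha256).hexdigest()[:16]

# Hypothetical source records containing one sensitive field.
SOURCE = [
    {"patient_ssn": "123-45-6789", "visit_type": "checkup", "cost": "90"},
    {"patient_ssn": "123-45-6789", "visit_type": "lab", "cost": "40"},
    {"patient_ssn": "987-65-4321", "visit_type": "checkup", "cost": "90"},
]

conn = sqlite3.connect(":memory:")  # stand-in for a cloud warehouse
conn.execute("CREATE TABLE raw_visits (patient_key TEXT, visit_type TEXT, cost TEXT)")

# ETL-style step: the sensitive field is transformed before it lands.
conn.executemany(
    "INSERT INTO raw_visits VALUES (?, ?, ?)",
    [(pseudonymise(r["patient_ssn"]), r["visit_type"], r["cost"]) for r in SOURCE],
)

# ELT-style step: the remaining shaping happens inside the warehouse.
rows = conn.execute("""
    SELECT patient_key, SUM(CAST(cost AS INTEGER)) AS total_cost
    FROM raw_visits
    GROUP BY patient_key
    ORDER BY total_cost DESC
""").fetchall()

for patient_key, total_cost in rows:
    print(patient_key, total_cost)
```

The raw identifier never reaches the warehouse, yet the non-sensitive columns stay available for whatever transformations analysts need later.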

Conclusion

At some point, the ETL vs ELT debate stops being about pipelines and becomes about how your organisation uses data.

If data is tightly controlled and rarely reinterpreted, ETL fits. If data is explored, reshaped, and reused constantly, ELT fits.

Most enterprises operate somewhere in between. The best systems do not choose one; they are designed to support both.

What Leaders Actually Ask

Is ELT replacing ETL?

No. It’s expanding the toolbox. ETL still makes sense where control and compliance are critical.

Can we run both in the same pipeline?

Yes. Many enterprises use ELT for ingestion and ETL for sensitive transformations.

When should we move to ELT?

When data volume increases, use cases diversify, and your platform can handle transformation workloads efficiently.

What’s the biggest mistake teams make?

Treating this as a tooling decision instead of an architectural one.